Moonshot AI’s technical report on Kimi K2 describes a large language model that posts strong results in coding, mathematics, and reasoning. In this article, we’ll explore the key aspects of the Kimi K2 model, examine the innovations detailed in its technical report, and discuss what the release contributes to the AI landscape.

What Is Kimi K2 and Why Is It Important?

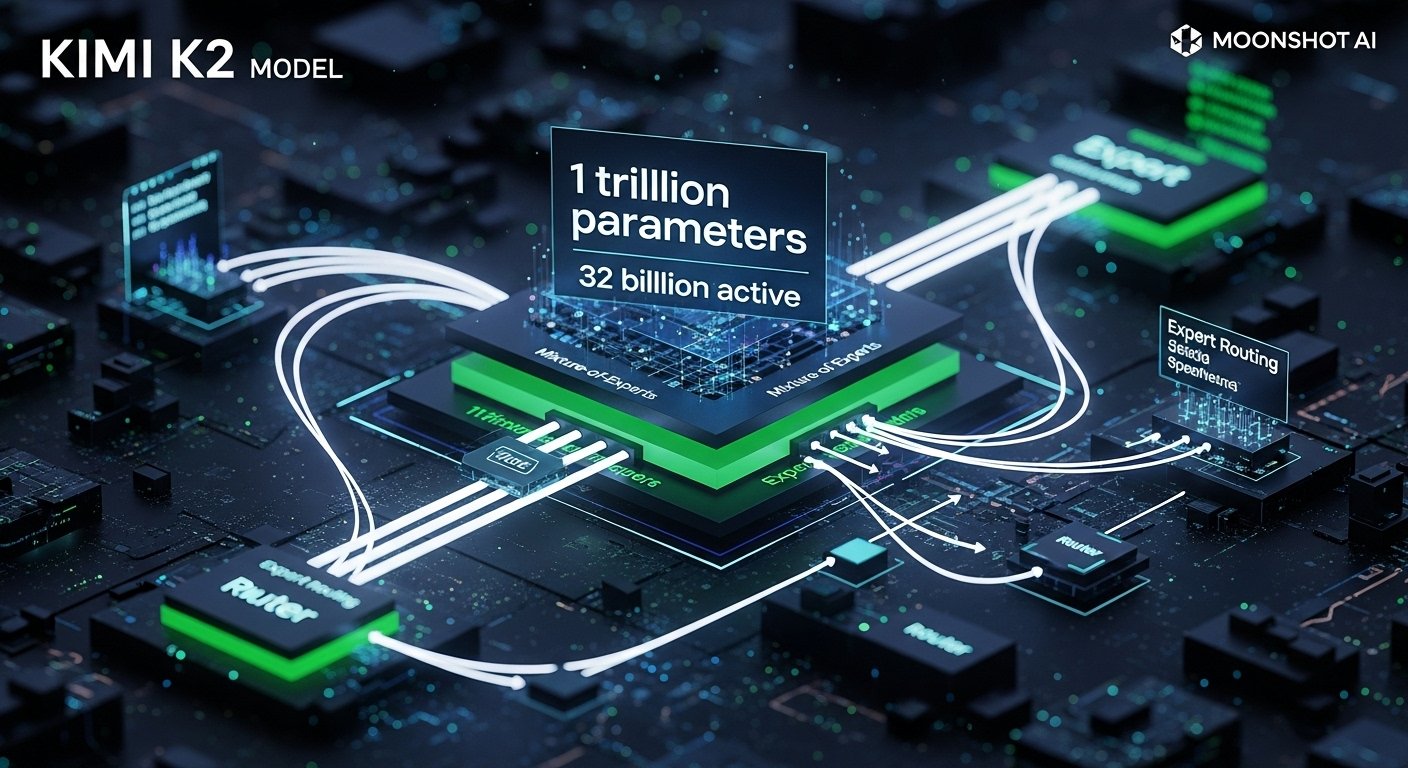

The Kimi K2 model, developed by Moonshot AI, is a large language model (LLM) based on a Mixture-of-Experts (MoE) architecture. It has 1 trillion total parameters, of which 32 billion are activated per token, and the report shows strong results on a range of AI benchmarks. At this scale, Kimi K2 performs well on tasks such as code generation, mathematical reasoning, and autonomous problem-solving.

The release of the Kimi K2 technical report is significant because it not only demonstrates the capabilities of a trillion-parameter LLM but also showcases the integration of reinforcement learning (RL) and agentic data synthesis, enabling the model to perform real-world tasks more autonomously.

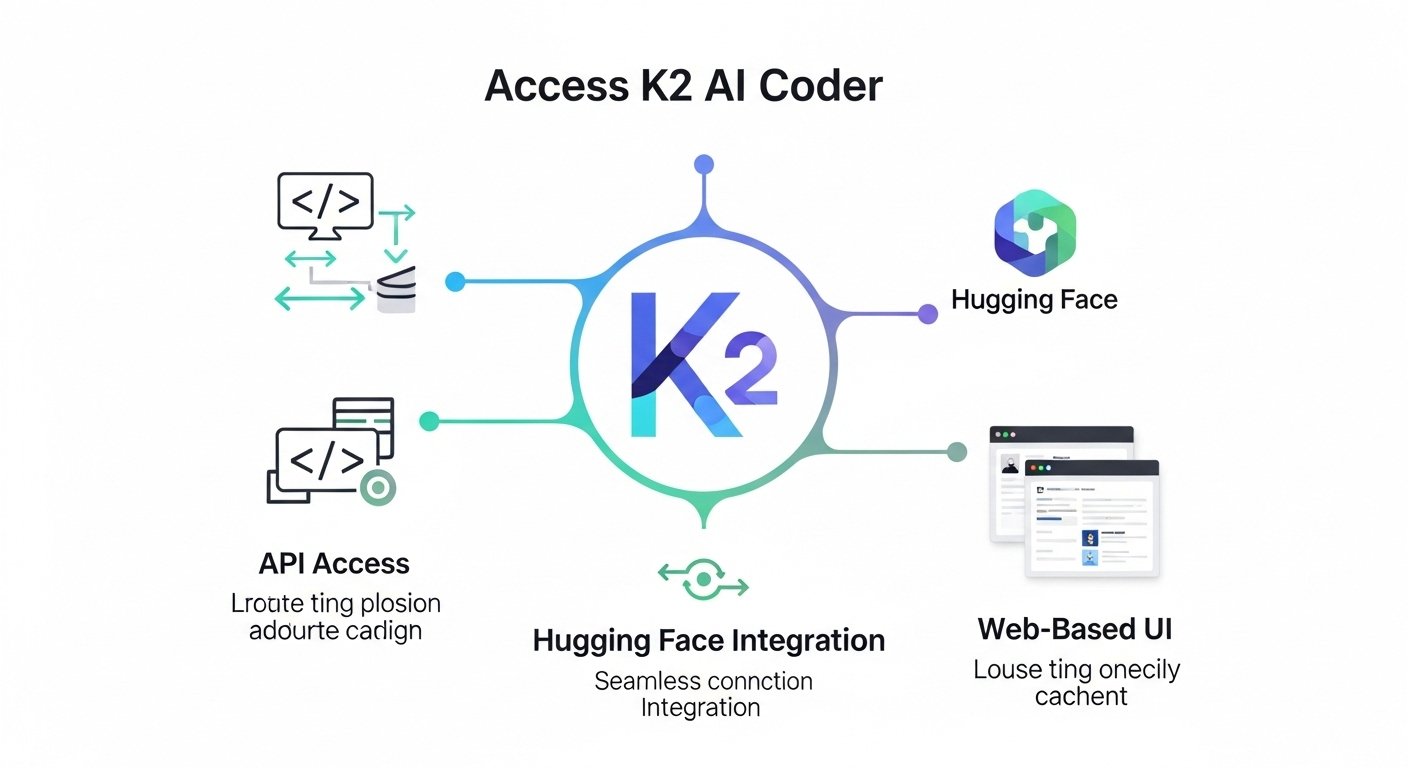

But why should AI researchers, developers, and businesses care about this release? For one, Kimi K2 is open-source, which makes it more accessible than many closed-source alternatives and lets others build on it. By diving into the technical report, we can better understand how the model works and where it might be applied.

Overview of the Kimi K2 Technical Report

The Kimi K2 technical report covers the model’s architecture, training methodology, and benchmark performance. One of the key highlights is the Mixture-of-Experts (MoE) design, which keeps the compute spent on each token manageable even at a trillion parameters. Beyond the architecture, the report also describes the changes Moonshot AI made to the training process to keep it stable and to prepare the model for real-world tasks.

Let’s break down some of the major components covered in the report.

Kimi K2 Model Architecture: Mixture-of-Experts (MoE)

What Is MoE and How Does It Benefit Kimi K2?

At the heart of the Kimi K2 model lies the Mixture-of-Experts (MoE) architecture. In a conventional dense model, every parameter is used for each token processed. In an MoE architecture, a router sends each token to a small number of expert sub-networks, so only a subset of the model’s parameters is activated at any given time. For Kimi K2, 32 billion of the 1 trillion parameters (about 3.2%) are activated per token, which keeps the per-token compute far below what a dense model of the same size would need.

The benefit of MoE is twofold:

- Efficiency: By activating only a small subset of parameters for each token, the model keeps its full capacity without paying the full computational cost on every forward pass.

- Scalability: The total parameter count can grow to massive sizes, handling more complex problems that traditional architectures might struggle with, while the cost per token grows much more slowly.

This approach is why Kimi K2 can excel in tasks that demand both high scalability and fast processing times, such as advanced programming or solving complex mathematical problems.
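To make the routing idea concrete, here is a minimal sketch of top-k expert selection in plain Python. It is an illustration only, not Moonshot AI’s implementation: the expert count, the value of k, the softmax gating over the selected experts, and the toy "experts" (simple scaling functions) are all assumptions chosen for readability.

```python
import math
import random

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(router_scores, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    order = sorted(range(len(router_scores)),
                   key=lambda i: router_scores[i], reverse=True)
    top = order[:k]
    # Assumption: gate weights are a softmax over the selected experts only.
    weights = softmax([router_scores[i] for i in top])
    return list(zip(top, weights))

def moe_layer(x, experts, router_scores, k):
    """Run only the selected experts and mix their outputs by gate weight."""
    out = [0.0] * len(x)
    for idx, weight in route_top_k(router_scores, k):
        y = experts[idx](x)  # the other experts are never evaluated
        out = [o + weight * yi for o, yi in zip(out, y)]
    return out

# Toy setup with illustrative numbers: 8 experts, 2 active per token.
random.seed(0)
NUM_EXPERTS, TOP_K = 8, 2
experts = [lambda x, s=i + 1: [s * v for v in x] for i in range(NUM_EXPERTS)]
router_scores = [random.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]
token = [1.0, -0.5, 2.0]

selected = route_top_k(router_scores, TOP_K)
output = moe_layer(token, experts, router_scores, TOP_K)
print("selected experts and weights:", selected)
print("layer output:", output)
```

In this toy, only 2 of the 8 experts run for the token, which is the same principle that lets a model activate a small fraction of its parameters per token.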

Training Techniques and Innovations in Kimi K2

Novel Optimization with MuonClip

Training a trillion-parameter model like Kimi K2 comes with its own set of challenges. The Moonshot AI team introduced an optimizer called MuonClip, which builds on the Muon optimizer. The primary goal of MuonClip is to prevent “loss spikes” and other forms of training instability, a common issue with models of this size.

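The MuonClip optimizer uses a technique known as QK-clip to maintain stability during training. QK-clip rescales the query and key projection weights after an update whenever the attention logits grow too large, which keeps those logits bounded. This allows Kimi K2 to be trained at massive scale without the instability that often plagues large language models.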
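One way to picture QK-clip is as a rescaling step applied to the query and key projection weights when the largest attention logit exceeds a threshold. The sketch below is a simplified single-head toy under stated assumptions: the threshold `TAU`, the tiny 2x2 matrices, and the helper names are hypothetical, the usual 1/sqrt(d) attention scaling is omitted, and the even split of the scaling factor between the two matrices is an illustrative choice rather than a claim about the report’s exact formulation.

```python
import math

def matvec(w, x):
    """Multiply matrix w (list of rows) by vector x."""
    return [sum(wi * xi for wi, xi in zip(row, x)) for row in w]

def max_attention_logit(w_q, w_k, tokens):
    """Largest query-key dot product over all token pairs."""
    queries = [matvec(w_q, t) for t in tokens]
    keys = [matvec(w_k, t) for t in tokens]
    return max(sum(a * b for a, b in zip(q, k))
               for q in queries for k in keys)

def qk_clip(w_q, w_k, max_logit, tau):
    """Shrink W_q and W_k so the largest logit is at most tau."""
    if max_logit <= tau:
        return w_q, w_k  # already within bounds, leave weights untouched
    gamma = tau / max_logit
    # Logits are bilinear in (W_q, W_k), so scaling each matrix by
    # sqrt(gamma) scales every logit by exactly gamma.
    scale = math.sqrt(gamma)
    w_q = [[scale * v for v in row] for row in w_q]
    w_k = [[scale * v for v in row] for row in w_k]
    return w_q, w_k

# Hypothetical toy weights and inputs.
w_q = [[4.0, 0.0], [0.0, 3.0]]
w_k = [[5.0, 1.0], [1.0, 2.0]]
tokens = [[1.0, 2.0], [-1.0, 0.5], [2.0, 1.0]]
TAU = 10.0  # assumed threshold, for illustration only

before = max_attention_logit(w_q, w_k, tokens)
w_q, w_k = qk_clip(w_q, w_k, before, TAU)
after = max_attention_logit(w_q, w_k, tokens)
print("max logit before:", before, "after:", after)
```

Because the clip acts on the weights rather than on the logits themselves, the bound carries over to later forward passes until the next optimizer update moves the weights again.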

Reinforcement Learning (RL) for Enhanced Autonomy

Another notable feature of the Kimi K2 model is the integration of reinforcement learning (RL) in the training process. In Kimi K2, RL is applied in environments that simulate real-world interactions, allowing the model to develop the ability to solve problems autonomously.

Moonshot AI uses synthetic agentic data to train Kimi K2 in an environment that mimics real-world scenarios. This includes tool-use, where the model learns to interact with different tools to achieve its goals, and verifiers, which act as judges to evaluate the correctness of the model’s outputs.

This process is crucial for tasks that require reasoning, problem-solving, and decision-making. It enables Kimi K2 to perform complex tasks like code generation, where the model must not only understand the syntax but also the underlying logic and intended function of the code.
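The verifier idea can be sketched for a coding task as a function that runs a candidate solution against test cases and returns a reward. This is a hypothetical minimal example, not the pipeline described in the report: the task (squaring an integer), the binary reward, and the names `verify_solution`, `correct`, and `buggy` are assumptions made for illustration.

```python
from typing import Callable, List, Tuple

def verify_solution(candidate: Callable[[int], int],
                    test_cases: List[Tuple[int, int]]) -> float:
    """Return 1.0 if the candidate passes every test case, else 0.0."""
    for arg, expected in test_cases:
        try:
            if candidate(arg) != expected:
                return 0.0
        except Exception:
            # A crash counts as a failed attempt, not as a verifier error.
            return 0.0
    return 1.0

# Hypothetical task: return the square of an integer.
test_cases = [(0, 0), (2, 4), (-3, 9)]

def correct(x: int) -> int:
    return x * x

def buggy(x: int) -> int:
    return x * 2  # passes the first two cases, fails on -3

rewards = [verify_solution(correct, test_cases),
           verify_solution(buggy, test_cases)]
print(rewards)  # → [1.0, 0.0]
```

The buggy candidate shows why a verifier checks behavior rather than surface form: the code is syntactically valid and passes some cases, but it still earns no reward because it does not implement the intended function.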

Kimi K2’s Performance on Key AI Benchmarks

The true test of any AI model is its real-world performance. The Kimi K2 technical report includes results from several AI benchmarks, where the model has demonstrated exceptional capabilities in tasks such as code generation, mathematical problem solving, and logical reasoning.

LiveCodeBench v6

On LiveCodeBench v6, a popular coding benchmark, Kimi K2 achieved 53.7% pass@1, meaning that a single generated solution passed the tests for 53.7% of problems. According to the report, this is competitive with leading models, including closed-source options, and it demonstrates the model’s capability in practical coding tasks.
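For readers unfamiliar with the metric, pass@k is commonly computed with the unbiased estimator 1 - C(n-c, k) / C(n, k), where n samples are drawn per problem and c of them pass. The sketch below applies it with k = 1 to made-up results; whether the report’s evaluation used exactly this procedure is an assumption, and the sample counts are not real benchmark data.

```python
from math import comb

def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate from n samples, c of which passed."""
    if n - c < k:
        return 1.0  # every size-k subset contains at least one pass
    return 1.0 - comb(n - c, k) / comb(n, k)

def benchmark_pass_at_k(results, k: int) -> float:
    """Average the per-problem pass@k over a list of (n, c) pairs."""
    return sum(pass_at_k(n, c, k) for n, c in results) / len(results)

# Made-up results for four problems: (samples drawn, samples that passed).
results = [(4, 4), (4, 2), (4, 0), (4, 1)]
score = benchmark_pass_at_k(results, k=1)
print(f"pass@1 = {score:.4f}")  # → pass@1 = 0.4375
```

For k = 1 the estimator reduces to c / n per problem, so the benchmark score is simply the average fraction of passing samples.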

AIME 2025 (Mathematics Benchmark)

For mathematical reasoning, Kimi K2 scored 49.5% on the AIME 2025 benchmark. AIME problems are competition-level and require multi-step reasoning rather than recall, so this score is a meaningful signal of the model’s mathematical ability.

Applications of Kimi K2: Real-World Use Cases

Given its advanced capabilities, Kimi K2 has the potential to be applied in a wide range of industries. Some of the most notable use cases include:

- Code Generation and Debugging: As demonstrated by its performance on LiveCodeBench, Kimi K2 is an ideal tool for developers seeking to automate code generation, debugging, and testing.

- Mathematical Problem-Solving: Kimi K2’s impressive performance on mathematical benchmarks makes it useful in education and research, particularly in fields requiring complex calculations and proofs.

- Autonomous Decision-Making: With its reinforcement learning capabilities, Kimi K2 could be used in areas such as robotics, autonomous vehicles, and AI-powered decision-making systems.

Conclusion

The Kimi K2 technical report is a detailed account of a trillion-parameter model and how it was built. From its Mixture-of-Experts (MoE) architecture to its training techniques and its results on coding and mathematics benchmarks, Kimi K2 shows what an openly available model at this scale can do.

By making this advanced AI model open-source, Moonshot AI has democratized access to cutting-edge technology, enabling businesses, researchers, and developers to harness its power for a variety of applications. As the AI landscape continues to evolve, Kimi K2 represents a significant milestone in the quest for more intelligent, autonomous systems.

FAQs

Q1: What is the Kimi K2 model?

The Kimi K2 model is a Mixture-of-Experts (MoE) large language model developed by Moonshot AI. It has 1 trillion parameters and excels in tasks like coding, mathematics, and reasoning.

Q2: How is Kimi K2 different from other AI models?

Kimi K2 uses MoE architecture to activate only a subset of its parameters, making it more efficient while still offering powerful performance on complex tasks. It also incorporates reinforcement learning for autonomous problem-solving.

Q3: What are some real-world applications of Kimi K2?

Some potential applications of Kimi K2 include code generation, mathematical problem-solving, and autonomous decision-making in industries like software development, education, and robotics.

Q4: Is Kimi K2 available for public use?

Yes, Moonshot AI has made the Kimi K2 model open-source, allowing developers, researchers, and businesses to use and innovate upon it.
