Building AI applications doesn’t have to break the bank or require expensive subscriptions. With Vercel’s free Hobby plan, developers can create and deploy AI-powered applications without paying for an upgrade. This guide shows how to build AI apps on Vercel quickly and for free, making the most of the platform’s capabilities while working within its limits.

The rise of AI development has democratized access to powerful machine learning capabilities, but many developers assume they need expensive hosting plans to deploy AI applications. Vercel challenges this assumption by offering robust infrastructure, AI-specific tools, and generous free tier limits that make AI development accessible to everyone from solo developers to small teams testing innovative concepts.

Understanding Vercel’s Free Tier for AI Development

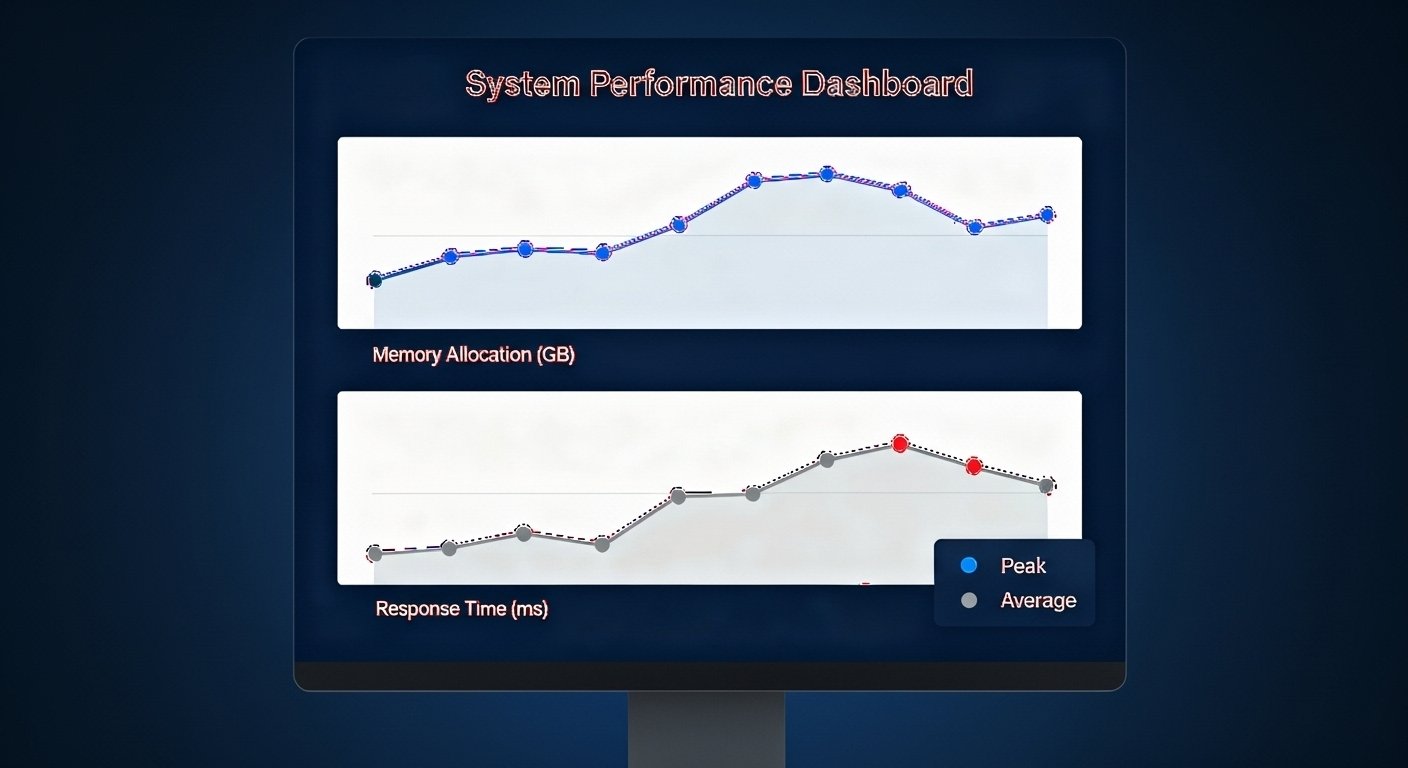

Vercel’s Hobby plan provides substantial resources that make it ideal for AI application development. The free tier includes 1 million function invocations per month, 4 hours of active CPU time, 360 GB-hours of provisioned memory, and 100 GB of fast data transfer. These generous limits allow developers to experiment extensively with AI features without worrying about immediate upgrade pressures.

The platform’s infrastructure automatically handles scaling, caching, and global distribution through its Content Delivery Network (CDN). Static assets and cached responses are served from an edge location close to each user, so even free-tier applications load quickly for users worldwide.

Key Free Tier Resources for AI Apps

- Function Invocations: 1 million monthly executions for serverless functions
- Active CPU Time: 4 hours of processing power per month
- Memory Allocation: 360 GB-hours of provisioned memory
- Data Transfer: 100 GB of fast data transfer monthly
- Build Time: 100 hours of build execution time
- Concurrent Builds: Single build at a time
- Projects: Up to 200 projects per account
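To see how these quotas interact, it helps to turn them into a per-request budget. The sketch below uses only the figures listed above; the function names and the 200 ms example workload are illustrative assumptions, not Vercel terminology.

```typescript
// Hobby-plan quotas as quoted in the list above (per month).
const HOBBY_QUOTAS = {
  invocations: 1000000,
  activeCpuHours: 4,
};

// Average active-CPU milliseconds available per invocation if the whole
// monthly invocation allowance is used.
function cpuMsPerInvocation(cpuHours: number, invocations: number): number {
  return (cpuHours * 3600 * 1000) / invocations;
}

// Number of calls the CPU budget supports when each call uses
// `cpuMsPerCall` of active CPU, capped at the invocation quota.
function invocationsWithinCpuBudget(
  cpuHours: number,
  cpuMsPerCall: number,
  invocationCap: number,
): number {
  const byCpu = Math.floor((cpuHours * 3600 * 1000) / cpuMsPerCall);
  return Math.min(byCpu, invocationCap);
}

// 4 h = 14,400,000 ms, spread over 1,000,000 calls.
const averageBudgetMs = cpuMsPerInvocation(
  HOBBY_QUOTAS.activeCpuHours,
  HOBBY_QUOTAS.invocations,
);

// Assumed workload: 200 ms of active CPU per call.
const callsAt200Ms = invocationsWithinCpuBudget(
  HOBBY_QUOTAS.activeCpuHours,
  200,
  HOBBY_QUOTAS.invocations,
);

console.log(averageBudgetMs, callsAt200Ms);
```

Under these assumptions, a function that uses 200 ms of active CPU per call exhausts the 4-hour CPU allowance after 72,000 calls, long before the 1 million invocation limit. For CPU-heavy handlers, CPU time rather than invocation count is likely to be the binding constraint.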

Leveraging Vercel’s AI-Specific Features

The Vercel AI SDK: Your Free Development Toolkit

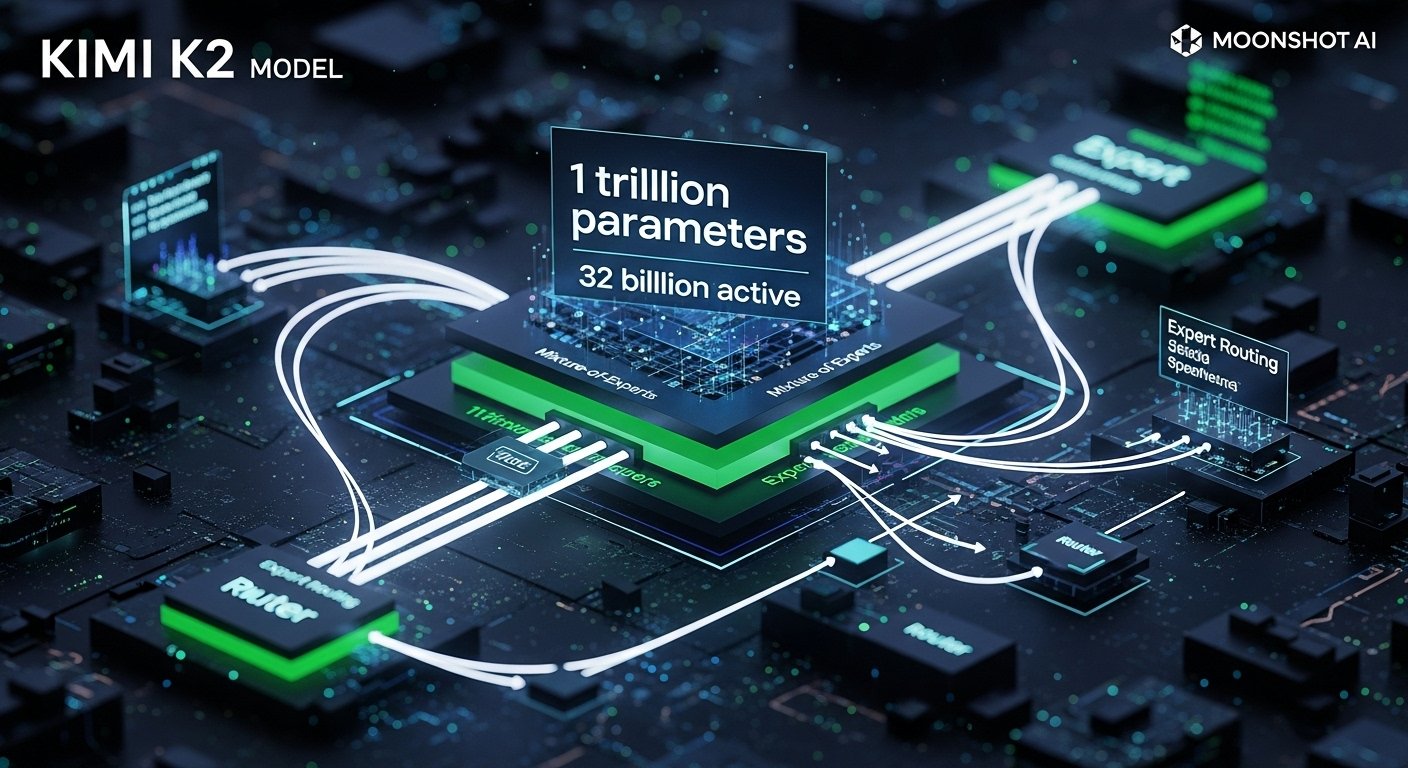

The Vercel AI SDK represents one of the most valuable free resources for AI developers. This open-source toolkit provides framework-agnostic tools for building AI applications with unified APIs for text generation, embeddings, and tool calls. The SDK supports streaming responses, structured outputs, and seamless integration with popular AI models from various providers.

The SDK’s streaming capabilities enable real-time user interactions without consuming excessive resources. Instead of waiting for complete AI responses before displaying results, applications can stream partial responses as they’re generated, creating more responsive user experiences while optimizing resource usage within the free tier limits.
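The streaming idea can be sketched without any SDK at all. The example below is not the AI SDK’s API; `fakeModelStream` is a hypothetical stand-in for a provider stream, and `renderStream` is an assumed helper name. It only illustrates the pattern of showing partial text as chunks arrive.

```typescript
// Hypothetical stand-in for a model call: yields the reply one word at a
// time. A real provider stream would yield tokens as they are generated.
async function* fakeModelStream(reply: string): AsyncGenerator<string> {
  for (const word of reply.split(" ")) {
    yield word + " ";
  }
}

// Consumes any async iterable of text chunks and reports the accumulated
// text after every chunk, so a UI can repaint without waiting for the end.
async function renderStream(
  chunks: AsyncIterable<string>,
  onUpdate: (partial: string) => void,
): Promise<string> {
  let text = "";
  for await (const chunk of chunks) {
    text += chunk;
    onUpdate(text);
  }
  return text.trim();
}

// Usage: log each partial result as it becomes available.
void renderStream(fakeModelStream("Streaming keeps the interface responsive"), (partial) => {
  console.log(partial);
});
```

Because `renderStream` accepts any `AsyncIterable<string>`, the fake generator could be swapped for a real provider stream without changing the consuming code.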

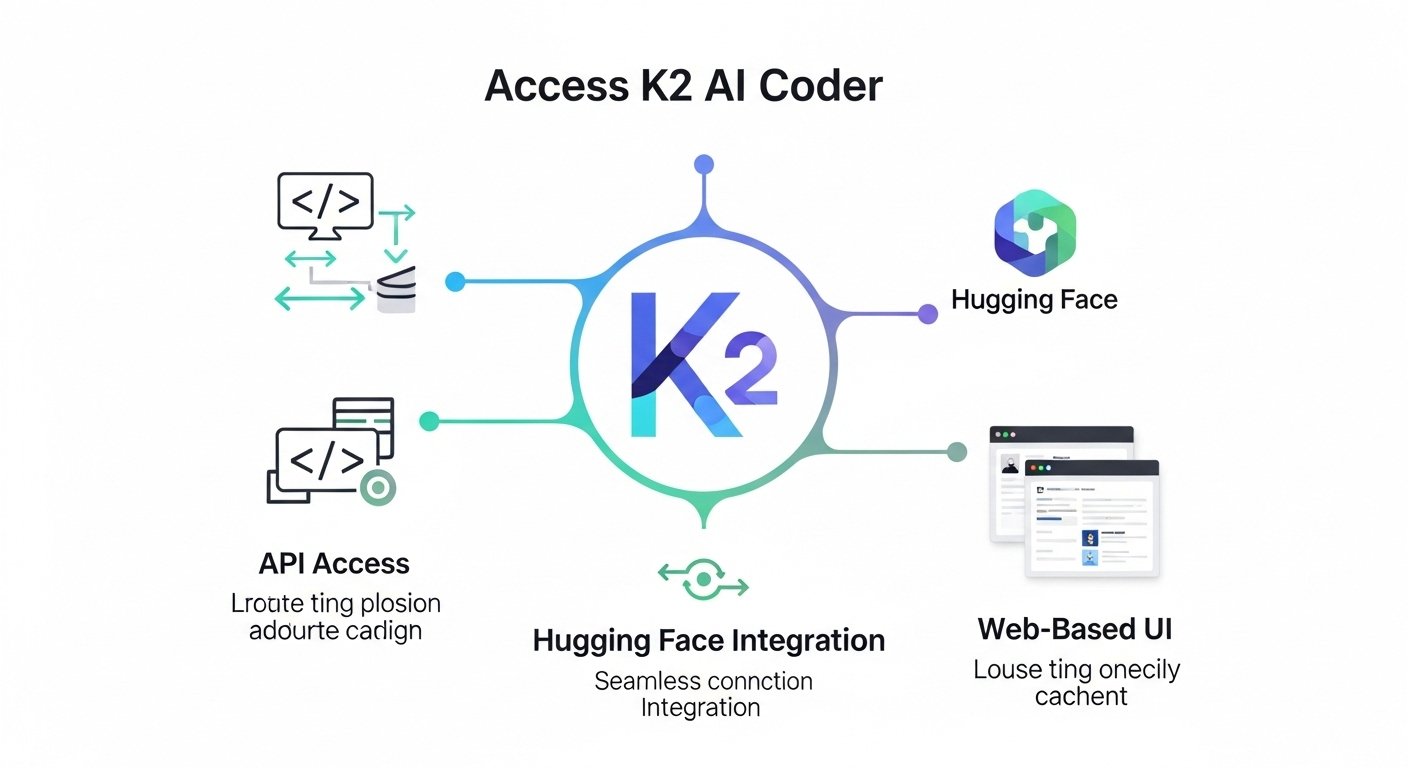

AI Gateway: Access to Around 100 Models

Vercel’s AI Gateway provides alpha access to approximately 100 AI models without requiring individual API keys or provider accounts. During the alpha period, usage remains free with rate limits based on the Hobby plan tier. This eliminates the complexity of managing multiple AI provider relationships while enabling developers to experiment with different models and capabilities.

The Gateway handles authentication and usage tracking, and will eventually manage billing across providers. For developers building AI apps on Vercel’s free tier, this removes a barrier to experimenting with different models and reduces the overhead of managing several provider accounts.

Critical Limitations to Navigate

Function Timeout Constraints

The most significant limitation for AI development on Vercel’s free tier is the short function timeout, 10 seconds by default in the limits this guide is based on. Model inference, particularly for large language models or image generation, often takes longer than that, so a request that simply waits for a full response can be cut off before it completes. Vercel has revised its duration limits more than once, so confirm the current value before designing around a specific number.

Understanding this limitation is crucial for architectural decisions. Successful AI applications on the free tier must design around quick response patterns or implement asynchronous processing strategies that work within the timeout constraints.
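One way to design around the timeout is to give every model call its own deadline that is shorter than the platform limit, so the function can still return a useful error or fallback. The sketch below assumes the 10-second limit discussed above; `withDeadline` and the 2-second headroom are illustrative choices, not platform settings.

```typescript
// Rejects if `work` has not settled within `ms` milliseconds.
// Note: this does not cancel the underlying work, it only stops waiting.
function withDeadline<T>(work: Promise<T>, ms: number): Promise<T> {
  return new Promise<T>((resolve, reject) => {
    const timer = setTimeout(() => {
      reject(new Error(`deadline of ${ms} ms exceeded`));
    }, ms);
    work.then(
      (value) => {
        clearTimeout(timer);
        resolve(value);
      },
      (error) => {
        clearTimeout(timer);
        reject(error);
      },
    );
  });
}

// Assumed budget: the 10 s limit minus headroom for cold start and
// response serialization. The 2 s headroom is a guess to tune per app.
const FUNCTION_LIMIT_MS = 10000;
const HEADROOM_MS = 2000;
const MODEL_DEADLINE_MS = FUNCTION_LIMIT_MS - HEADROOM_MS;

// Usage with a hypothetical slow model call that takes 50 ms.
const slowModelCall = new Promise<string>((resolve) => {
  setTimeout(() => resolve("model reply"), 50);
});
withDeadline(slowModelCall, MODEL_DEADLINE_MS).then(
  (reply) => console.log(reply),
  (error) => console.log("fell back:", String(error)),
);
```

A handler that catches the deadline error can respond with a cached answer or a "still working" message instead of letting the platform terminate the request.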

Resource Management Strategies

When free tier limits are reached, Vercel pauses projects until the next 30-day cycle begins. Unlike paid plans, there’s no overage support, making resource monitoring essential for maintaining application availability. Developers must implement efficient caching, optimize function execution, and monitor usage patterns to avoid unexpected service interruptions.

Optimization Techniques for Maximum Efficiency

Static Generation and Caching

Implementing Static Site Generation (SSG) wherever possible reduces function invocations and CPU usage. Pre-generating AI-powered content during build time rather than runtime can significantly extend the effective capacity of the free tier. This approach works particularly well for AI applications that generate content based on predictable patterns or datasets.

Proper caching headers ensure repeated requests for similar AI responses are served from the edge cache rather than triggering new function executions. This strategy can dramatically reduce resource consumption while improving response times for end users.
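As a rough illustration, the headers in question are standard `Cache-Control` directives. The helper names below are hypothetical and the durations are placeholders; the sketch only shows how such a header value might be assembled for a response that is safe to share between users.

```typescript
// Builds a Cache-Control value aimed at a shared (CDN) cache.
// s-maxage: seconds the CDN may serve the response without revalidating.
// stale-while-revalidate: extra seconds a stale copy may be served while
// a fresh one is fetched in the background.
function edgeCacheControl(freshSeconds: number, staleSeconds: number): string {
  if (freshSeconds < 0 || staleSeconds < 0) {
    throw new RangeError("durations must be non-negative");
  }
  return `public, s-maxage=${freshSeconds}, stale-while-revalidate=${staleSeconds}`;
}

// Headers for a cacheable JSON response, returned as a plain object so
// they can be passed to whichever response API the project uses.
function cachedJsonHeaders(
  freshSeconds: number,
  staleSeconds: number,
): Record<string, string> {
  return {
    "Content-Type": "application/json",
    "Cache-Control": edgeCacheControl(freshSeconds, staleSeconds),
  };
}

// Example: fresh for one hour, then served stale for up to a day.
console.log(cachedJsonHeaders(3600, 86400));
```

CDN caches generally key on the request URL, so this works best for GET endpoints where the input is part of the path or query string. Responses that depend on a POST body or on the individual user should not be marked `public`.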

Bundle Size Optimization

Minimizing function bundle sizes reduces cold start times and memory usage. Using dynamic imports for AI libraries and models ensures only necessary code loads for each function execution. This optimization becomes particularly important given the memory constraints of the free tier.
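A minimal sketch of the lazy-loading pattern follows. The heavy dependency is stubbed so the example runs on its own; in a real project the loader body would typically be a dynamic import such as `() => import("some-heavy-lib")`, where that package name is purely a placeholder.

```typescript
// Wraps an expensive async loader so it runs at most once per warm
// function instance; later calls reuse the same promise.
function lazy<T>(loader: () => Promise<T>): () => Promise<T> {
  let cached: Promise<T> | undefined;
  return () => {
    if (cached === undefined) {
      cached = loader();
    }
    return cached;
  };
}

// Hypothetical heavy dependency, stubbed here. loadCount exists only to
// show how often the loader actually runs.
let loadCount = 0;
const getTokenizer = lazy(async () => {
  loadCount += 1;
  return {
    countTokens: (text: string): number =>
      text.split(/\s+/).filter((part) => part.length > 0).length,
  };
});

// Only requests that reach this line pay the loading cost.
getTokenizer().then((tokenizer) => {
  console.log(tokenizer.countTokens("load only what each request needs"));
});
```

One caveat of this simple version is that a failed load is cached too. A production variant might clear `cached` when the promise rejects so the next request can retry.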

Tree shaking and careful dependency management help maintain lean function bundles. Developers should audit their AI application dependencies regularly to ensure they’re not including unnecessary libraries that consume precious memory resources.

Edge Function Utilization

Vercel’s Edge Functions run closer to users and consume fewer resources than traditional serverless functions. For AI applications requiring simple processing or routing logic, Edge Functions provide better performance within free tier constraints. They’re particularly effective for pre-processing user inputs before sending them to more resource-intensive AI operations.

Real-World AI Application Examples

Lightweight Chat Applications

Simple AI chatbots that handle basic queries work well within the free tier limitations. By implementing response caching and optimizing prompt engineering to generate concise responses quickly, developers can create functional chat interfaces that operate efficiently within the 10-second timeout window.
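Response caching for a chatbot can start as a small in-memory map keyed by a normalized prompt. The class below is an illustrative sketch, not part of any Vercel API; the normalization rule and the injectable clock are assumptions made to keep it simple and testable.

```typescript
type CachedReply = { reply: string; expiresAt: number };

class ReplyCache {
  private readonly entries = new Map<string, CachedReply>();
  private readonly ttlMs: number;
  private readonly now: () => number;

  // `now` is injectable so expiry can be tested without waiting.
  constructor(ttlMs: number, now: () => number = () => Date.now()) {
    this.ttlMs = ttlMs;
    this.now = now;
  }

  // Collapse case and whitespace so trivially different prompts share a key.
  static key(prompt: string): string {
    return prompt.trim().toLowerCase().replace(/\s+/g, " ");
  }

  get(prompt: string): string | undefined {
    const k = ReplyCache.key(prompt);
    const hit = this.entries.get(k);
    if (hit === undefined) return undefined;
    if (hit.expiresAt <= this.now()) {
      this.entries.delete(k);
      return undefined;
    }
    return hit.reply;
  }

  set(prompt: string, reply: string): void {
    this.entries.set(ReplyCache.key(prompt), {
      reply,
      expiresAt: this.now() + this.ttlMs,
    });
  }
}

// Usage: a repeated question is answered without a second model call.
const replies = new ReplyCache(5 * 60 * 1000);
replies.set("What is Vercel?", "A platform for deploying web apps.");
console.log(replies.get("  what is   vercel? "));
```

An in-memory cache like this lives only as long as a warm function instance and is not shared between instances, so it reduces repeat work rather than guaranteeing hits. A shared store would be needed for anything stronger.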

Content Enhancement Tools

AI applications that improve or analyze existing content, such as grammar checkers or content summarizers, often fit well within free tier constraints. These applications typically process smaller text chunks and can provide valuable functionality without requiring extensive computational resources.

Image Analysis and Tagging

Basic image analysis applications using services like Google Vision API or similar providers can operate effectively on the free tier. By optimizing image sizes and implementing efficient caching strategies, developers can create useful image processing applications without hitting resource limits.

Development Best Practices

Efficient Prompt Engineering

Crafting concise, effective prompts reduces AI model processing time and token consumption. Well-engineered prompts that generate focused responses help applications stay within timeout limitations while providing valuable functionality. This practice becomes essential when building AI apps on Vercel’s free tier.

Asynchronous Processing Patterns

Implementing queue-based or webhook-driven processing patterns allows applications to handle longer AI operations by breaking them into smaller, timeout-compliant chunks. This approach requires careful architecture but enables more sophisticated AI functionality within free tier constraints.
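The "smaller chunks" part of this pattern can be sketched as a pure function. The splitter below is deliberately naive (it treats every period, question mark, or exclamation mark as a sentence end) and the function name is an assumption; each resulting chunk would then be handed to its own short-lived function call by whatever queue or webhook mechanism the project uses.

```typescript
// Splits text into chunks of at most maxChars characters, breaking on
// sentence boundaries where possible. A single sentence longer than
// maxChars is hard-split.
function chunkBySentence(text: string, maxChars: number): string[] {
  if (maxChars <= 0) throw new RangeError("maxChars must be positive");
  const sentences = (text.match(/\S[^.!?]*[.!?]*/g) ?? []).map((s) => s.trim());
  const chunks: string[] = [];
  let current = "";
  for (const sentence of sentences) {
    const candidate = current.length === 0 ? sentence : current + " " + sentence;
    if (candidate.length <= maxChars) {
      current = candidate;
      continue;
    }
    if (current.length > 0) chunks.push(current);
    current = sentence;
    while (current.length > maxChars) {
      chunks.push(current.slice(0, maxChars));
      current = current.slice(maxChars);
    }
  }
  if (current.length > 0) chunks.push(current);
  return chunks;
}

// Usage: three short sentences with a 10-character limit per chunk.
console.log(chunkBySentence("One. Two. Three.", 10));
```

Keeping the splitter separate from the queueing logic makes it easy to tune chunk size against the timeout: smaller chunks finish faster but cost more invocations.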

Progressive Enhancement Strategies

Building applications that gracefully degrade when resource limits are reached ensures better user experiences. Implementing fallback responses or cached results helps maintain functionality even when real-time AI processing isn’t available due to resource constraints.
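A degradation path can be expressed as an ordered chain: live model first, then a cached result, then a fixed message. The sketch below assumes the live call signals unavailability by rejecting; the function and type names are illustrative.

```typescript
type Answer = { text: string; source: "live" | "cache" | "static" };

// Tries the live model first. If that fails, falls back to a cached
// reply, and finally to a fixed message.
async function answerWithFallback(
  prompt: string,
  live: (prompt: string) => Promise<string>,
  cached: (prompt: string) => string | undefined,
  staticReply: string,
): Promise<Answer> {
  try {
    return { text: await live(prompt), source: "live" };
  } catch (error) {
    const hit = cached(prompt);
    if (hit !== undefined) {
      return { text: hit, source: "cache" };
    }
    return { text: staticReply, source: "static" };
  }
}

// Usage with a hypothetical live call that always fails.
answerWithFallback(
  "summarize this page",
  async () => {
    throw new Error("quota exhausted");
  },
  () => undefined,
  "The assistant is temporarily unavailable. Please try again later.",
).then((answer) => console.log(answer.source, answer.text));
```

Returning the `source` alongside the text lets the interface tell users when they are seeing a cached or placeholder answer.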

Monitoring and Analytics

Usage Tracking Implementation

Implementing comprehensive usage tracking helps developers understand resource consumption patterns and optimize accordingly. Monitoring function execution times, memory usage, and invocation patterns provides insights for improving application efficiency and avoiding unexpected limit breaches.
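A lightweight tracker might look like the sketch below. It compares recorded usage against the invocation and CPU quotas quoted earlier in this guide; the class name and the 80% alert threshold are assumptions, and wall-clock duration is used as a stand-in for active CPU time, which overestimates usage for handlers that mostly wait on network calls.

```typescript
type UsageSnapshot = {
  invocations: number;
  cpuMs: number;
  invocationFraction: number;
  cpuFraction: number;
};

class UsageTracker {
  private invocations = 0;
  private cpuMs = 0;
  private readonly maxInvocations: number;
  private readonly maxCpuMs: number;

  constructor(maxInvocations: number, maxCpuHours: number) {
    this.maxInvocations = maxInvocations;
    this.maxCpuMs = maxCpuHours * 3600 * 1000;
  }

  // Call once per handled request with its measured duration.
  record(durationMs: number): void {
    this.invocations += 1;
    this.cpuMs += durationMs;
  }

  snapshot(): UsageSnapshot {
    return {
      invocations: this.invocations,
      cpuMs: this.cpuMs,
      invocationFraction: this.invocations / this.maxInvocations,
      cpuFraction: this.cpuMs / this.maxCpuMs,
    };
  }

  // True once either quota reaches the given fraction (0.8 means 80%).
  nearLimit(threshold: number): boolean {
    const s = this.snapshot();
    return s.invocationFraction >= threshold || s.cpuFraction >= threshold;
  }
}

// Usage: quotas from this guide, one request that took 120 ms.
const usage = new UsageTracker(1000000, 4);
usage.record(120);
console.log(usage.snapshot(), usage.nearLimit(0.8));
```

Counters held in memory reset whenever a function instance is recycled, so a real deployment would persist them in an external store or rely on the usage figures in the Vercel dashboard.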

Performance Optimization Metrics

Tracking key performance indicators such as response times, cache hit rates, and user engagement metrics helps developers identify optimization opportunities. These metrics become particularly important for maintaining good user experiences within free tier resource constraints.

Alternative Strategies and Hybrid Approaches

Client-Side Processing

Implementing AI functionality that runs in users’ browsers can reduce server-side resource consumption. Technologies like TensorFlow.js enable sophisticated AI capabilities without consuming Vercel function resources. This approach works particularly well for real-time processing requirements that might otherwise exceed timeout limitations.

Third-Party Service Integration

Integrating with third-party AI services that offer their own free tiers can extend the effective capabilities of applications hosted on Vercel’s free plan. By combining multiple free service tiers, developers can create more sophisticated AI applications without upgrading any individual service.

Scaling Considerations and Future Planning

When to Consider Upgrading

While this guide focuses on building AI apps on Vercel without upgrading, understanding upgrade triggers helps with long-term planning. Applications consistently hitting timeout limitations, exceeding monthly resource quotas, or requiring team collaboration features may benefit from paid plans.

The Pro plan ($20/month) increases function timeout to 60 seconds and provides 10x the resource quotas. This upgrade becomes worthwhile when free tier limitations significantly impact user experience or development velocity.

Migration Strategies

Planning for potential future migrations helps maintain application flexibility. Building applications with provider-agnostic architectures and avoiding Vercel-specific dependencies ensures smoother transitions if scaling requirements eventually exceed free tier capabilities.

Conclusion

Building AI apps on Vercel for free, without upgrading, is not only possible but practical for many use cases. The platform’s free tier, combined with its AI development tools and the optimization strategies above, lets developers create capable AI applications without financial barriers. Success requires understanding the limitations, implementing efficient architectures, and optimizing for resource constraints.

The key to success lies in working with, rather than against, the platform’s constraints. By leveraging static generation, implementing efficient caching, optimizing bundle sizes, and designing around timeout limitations, developers can build AI applications that provide significant value while operating entirely within the free tier. The combination of Vercel’s infrastructure, AI SDK, and Gateway access creates an environment where innovation thrives regardless of budget constraints.

Whether you’re a solo developer exploring AI concepts, a student working on projects, or a startup validating AI-powered ideas, Vercel’s free tier provides the foundation for bringing AI applications to life quickly and efficiently. The platform’s evolution continues to expand possibilities for free tier users, making it an increasingly attractive option for AI development without upgrade pressures.

FAQs

Q1: Can I build production AI applications on Vercel’s free tier?

A: Yes, but with limitations. The 10-second function timeout and monthly resource limits make the free tier suitable for lightweight AI applications, prototypes, and low-traffic production apps. Complex AI processing or high-traffic applications typically require paid plans.

Q2: What happens when I exceed the free tier limits?

A: Vercel pauses your projects until the next 30-day billing cycle. There’s no overage billing on the free tier, so monitoring usage is essential to maintain application availability.

Q3: How many AI models can I access through Vercel’s AI Gateway?

A: The AI Gateway provides access to approximately 100 AI models from various providers during its alpha phase. This access is currently free with rate limits based on your plan tier.

Q4: Is the Vercel AI SDK completely free to use?

A: Yes, the Vercel AI SDK is completely free and open source. You can use it with any AI provider or infrastructure, not just Vercel’s services.

Q5: Can I monetize applications built on Vercel’s free tier?

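A: Not on the Hobby plan. Vercel’s fair use guidelines restrict the Hobby plan to personal, non-commercial use, so an application that earns revenue needs a paid plan even if it stays within the free usage limits. The free tier remains a good place to prototype and validate an idea before monetizing it.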

Q6: What’s the difference between Vercel’s Edge Functions and regular serverless functions for AI apps?

A: Edge Functions run closer to users and consume fewer resources, making them better for simple AI processing tasks. Regular serverless functions provide more computational power but count against your CPU and memory quotas more heavily.
